We cannot underestimate the importance of content in this digital age. The way content works is undeniably powerful. Content writing is what puts your brand at the forefront by getting it in front of more and more eyeballs. Although content is the heart of everything, a few technical issues can keep good content away from potential users and viewers. These barriers may not seem very grave at the moment, but they can do serious damage to the life of your content. Not only that, they might hamper the content writing services that your company offers.

To be more careful and level up your game, here is a list of a few technical barriers that are best avoided.

Not hosting valuable content on the main site

Websites often choose to host their best content away from the main website, whether on subdomains or on separate sites, for a lot of reasons. This usually happens because it is considered easier from a development perspective. What, then, is the matter with this? Well, if content is not in your main site’s directory, Google won’t treat it as a part of your main site. Links acquired on your subdomains will not be passed on to the main site in the same way.

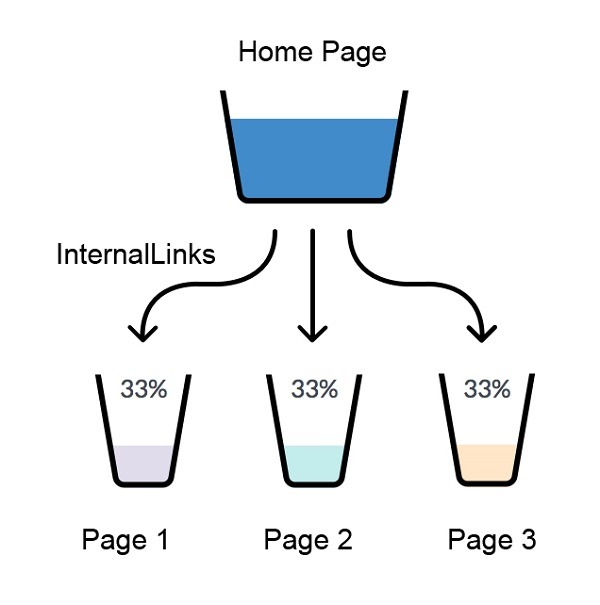

No use of internal links

Internal links help Google spider your content better and pass equity between sections of the website. If a page has many external links pointing to it but no outbound internal links, it will be red hot, but it will be hoarding equity that could be used elsewhere on the site. Not using internal links can cause serious damage to your content marketing. Always ensure that relevant links are included between hot pages and key pages.

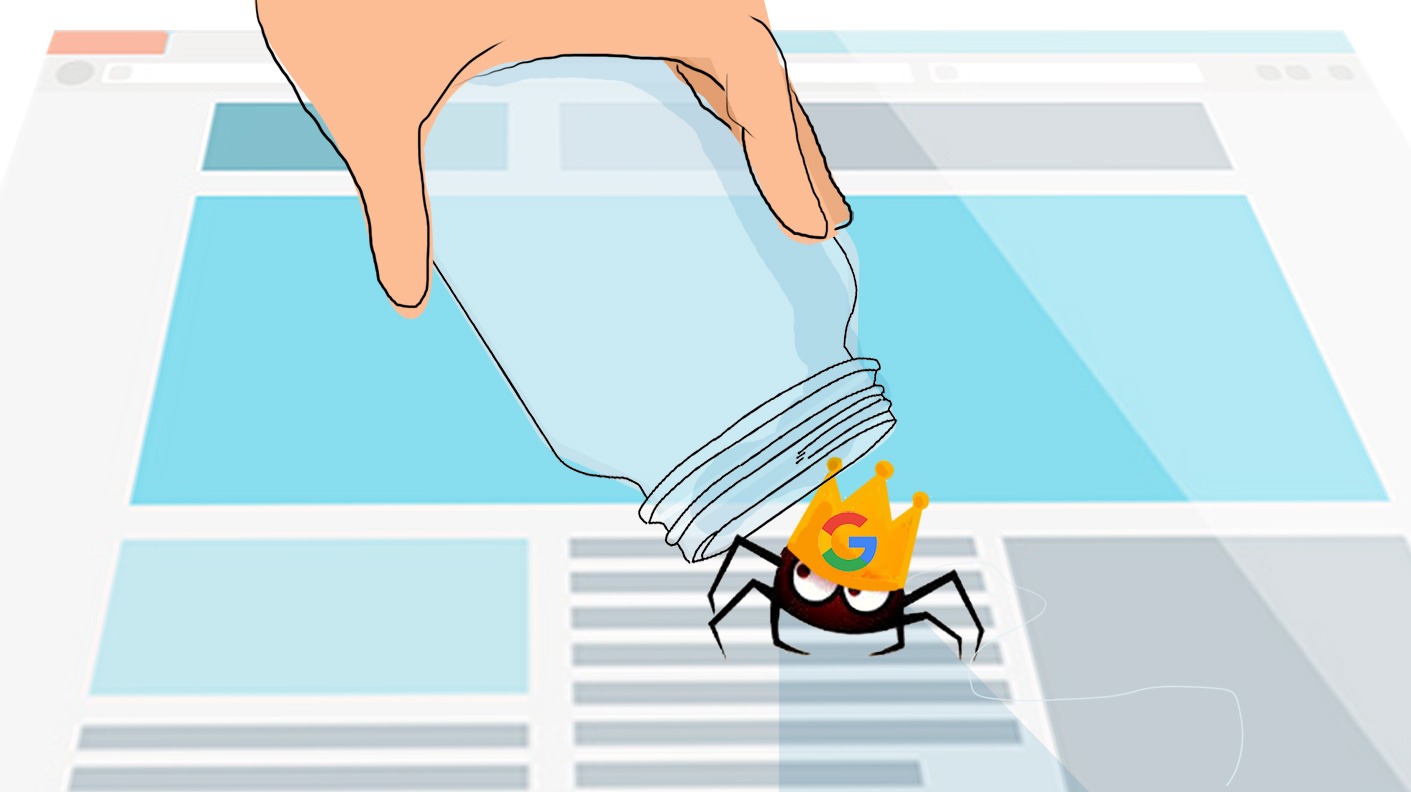
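To make the "red hot but hoarding" idea concrete, here is a minimal Python sketch that flags pages with no outbound internal links. It assumes you already have, from your own crawl, a mapping of each page URL to the link targets found on it. The function names and the `example.com` host used below are hypothetical and only for illustration.

```python
from urllib.parse import urljoin, urlparse


def internal_links(page_url, hrefs, site_host):
    """Return the set of link targets on page_url that stay on site_host."""
    targets = set()
    for href in hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links
        parsed = urlparse(absolute)
        if parsed.scheme in ("http", "https") and parsed.hostname == site_host:
            # Drop the fragment so "#section" links do not count as other pages.
            target = absolute.split("#", 1)[0]
            if target != page_url:
                targets.add(target)
    return targets


def pages_hoarding_equity(pages, site_host):
    """List pages that have no outbound internal links at all."""
    return sorted(
        url for url, hrefs in pages.items()
        if not internal_links(url, hrefs, site_host)
    )


# Hypothetical crawl data: page URL -> hrefs found on that page.
pages = {
    "https://example.com/guide": ["https://other.org/x", "#top"],
    "https://example.com/": ["/guide", "https://example.com/pricing"],
}
print(pages_hoarding_equity(pages, "example.com"))
```

Any page this returns is a candidate for adding relevant links to your key pages, especially if it also attracts many external links.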

Terrible crawl efficiency

Crawl efficiency is an issue that we come across almost all the time, especially with larger sites. Google has only a limited number of pages it will crawl on your site at any one time. Once that budget has been exhausted, it will move on and return at a later date. If your website has a large number of URLs, Google may get stuck crawling unimportant areas of it and may fail to index new content quickly enough. A common cause is an unreasonably large number of crawlable query parameters.
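One rough way to see whether query parameters are inflating your crawlable URL count is to tally them from a list of URLs, for example one exported from your server logs or a crawler. The sketch below is only an illustration under that assumption. The helper names and sample URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlparse


def parameter_counts(urls):
    """Count how many URLs carry each query parameter name."""
    counts = Counter()
    for url in urls:
        query = urlparse(url).query
        # Use a set so a parameter repeated in one URL is counted once.
        names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return counts


def variants_per_path(urls):
    """Count how many parameterised variants exist for each path."""
    paths = Counter()
    for url in urls:
        parsed = urlparse(url)
        if parsed.query:
            paths[parsed.path] += 1
    return paths


# Hypothetical sample of crawlable URLs.
urls = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?sort=name&page=2",
    "https://example.com/shoes",
    "https://example.com/hats?page=3",
]
print(parameter_counts(urls).most_common())
print(variants_per_path(urls).most_common())
```

Paths with many parameterised variants are the first places to check when deciding which parameters should stay crawlable.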

Too much thin content

If your website has a large number of thin content pages, then sprucing up one page with 10x better content is not going to be sufficient to cover for the deficits that your website already has. The Panda algorithm essentially scores your website based on the amount of unique content you have. Your rankings will drop if the majority of your pages do not meet the minimum score required to be deemed valuable. You may have to remove pages whose content cannot be improved.
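As a first pass at finding thin pages, you could count the words on each page and see what share of the site falls below a cut-off. This is a simple sketch, not how Panda scores a site: the 300-word threshold is an arbitrary assumption, word count is only a crude proxy for value, and the function names are hypothetical.

```python
import re


def word_count(text):
    """Count the words in a page's visible text."""
    return len(re.findall(r"\b\w+\b", text))


def thin_pages(pages, min_words=300):
    """Return URLs whose text is shorter than min_words (an assumed cut-off)."""
    return sorted(
        url for url, text in pages.items()
        if word_count(text) < min_words
    )


def thin_share(pages, min_words=300):
    """Return the fraction of pages that fall below the cut-off."""
    if not pages:
        return 0.0
    return len(thin_pages(pages, min_words)) / len(pages)


# Hypothetical data: page URL -> extracted body text.
pages = {
    "/long-guide": "word " * 500,
    "/stub": "short page",
}
print(thin_pages(pages))
print(thin_share(pages))
```

A high share suggests the problem is site-wide, which is when improving a single page is unlikely to be enough.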

Avoid these technical barriers and you’re sure to ace your content game in a jiffy!